B2B SaaS growth has long been built on a simple principle: rank in Google, earn traffic, convert pipeline.

That era isn't over, but it now has a competitor that runs on completely different logic.

Answer engines like ChatGPT, Perplexity and Google's AI Overviews don't return ranked lists of links. They synthesize answers.

In this new system, what shapes those answers isn't solely domain authority or page-one rankings.

It's citations.

That change has driven the emergence of Answer Engine Optimization (AEO), a new approach to AI search optimization.

If you're still optimizing exclusively for rankings, you're missing a structural shift. In AI search, visibility is no longer determined by ranking position alone. It is earned through consistent citations across the third-party sources that shape answer engine outputs.

PageRank and answer engines are solving different problems

Google's PageRank was built on a simple premise: links are votes. This logic defined search for decades, but it was designed for a specific job — returning a ranked list of documents for a human to evaluate. The search engine points you to the shelf; you decide what to read.

Answer engines don't work that way. They don't point. They synthesize.

Ask an AI assistant, "What's the best partner management platform for mid-market SaaS?" and it won't return a list of links. It will generate a direct answer by drawing on multiple sources in real time.

What often determines inclusion is citation consistency across trusted, third-party sources. Not rankings alone.

This is why a company can hold the number-one spot on Google and still be absent from AI-generated answers in the same category.

The ranking game and the citation game are played on different boards.

For a deeper look at how this shift is playing out in practice, this conversation with Webflow’s Guy Yalif on the Get it Together podcast breaks down how teams are adapting SEO foundations for AEO.

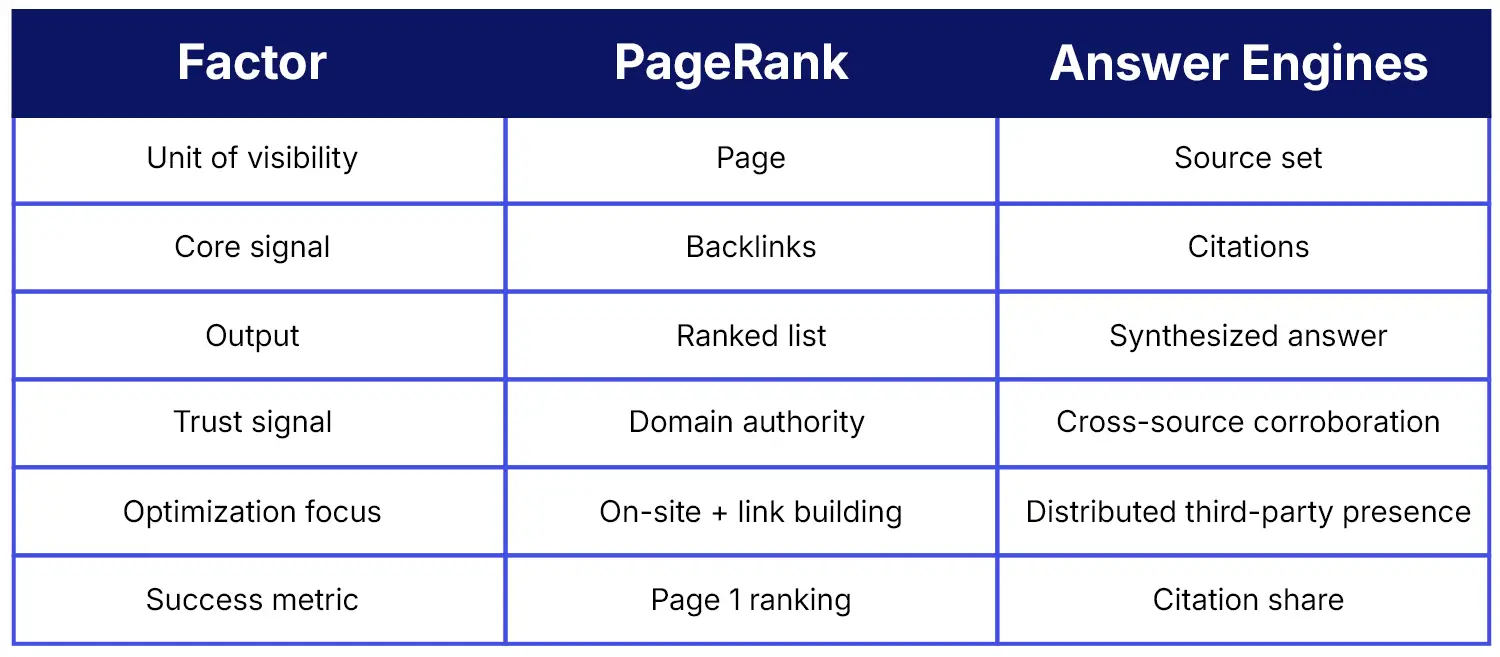

PageRank vs. answer engine logic

The table below captures the structural differences that require a different optimization strategy:


How AI models decide what to trust

Why do citations matter so much in AI search? Because answer engines look for patterns of corroboration across the web.

When an AI system processes a question about software pricing, capabilities or vendor comparisons, it draws on patterns reinforced across many sources. What surfaces is driven by repetition across independent sources, not a single authoritative source.

This means AI answers are shaped by the ecosystem of content that exists around a product — not just what the company publishes about itself.

Signals that influence how an AI model characterizes a product in response to a relevant inquiry include:

- Review sites (e.g. G2, Capterra)

- Analyst coverage

- Partner directories

- Integration listings

- Comparison articles

- Affiliate and third-party editorial content

Many discussions of AEO focus on on-site tactics like schema markup, structured content and page optimization. These things are important, of course, but they primarily address the owned-content layer of the citation problem.

We also need to consider the deeper layer of third-party citation surface: a distributed network of external sources that reference and evaluate a product.

Companies with strong owned content but weak third-party coverage often lose visibility to competitors with broader ecosystem representation — even when their own content is technically stronger.

AI models tend to prioritize corroborated external signals over self-published claims — a shift driven by the need for independent evidence that brand-owned content alone cannot provide. Semrush analysis of AI citation patterns shows answer engines rely more heavily on third-party sources than brand-owned pages when building responses.

Example: How AI answers actually get built

To make this concrete, consider a single prompt and observe how different answer engines surface information:

“What is the best partner management software for SaaS companies?”

Across tools like ChatGPT, Perplexity and Google AI Overviews, the same pattern emerges. Answers aren’t built from a single authoritative source. They’re synthesized from clusters of third-party content — review platforms, comparison articles, analyst commentary and integration listings.

What PartnerStack’s data reveals

PartnerStack’s research into AI search visibility shows a clear pattern: a significant share of AI citations for top-performing vendors come from ecosystem-generated content, not brand-owned assets.

Specifically, insights from a recent PartnerStack Research Lab report, based on analysis of AI citation patterns across top-performing vendors in the PartnerStack Network, show:

- 43% of citations appearing in AI-generated answers about top vendors originate from partner ecosystem sources

- Of that 43%, 21 percentage points are driven by active partner activity

- The remaining 22 percentage points represent existing ecosystem content that is not yet contributing to citation visibility

That’s not a marginal signal. It shifts where AI visibility actually comes from. A significant share of AI-generated visibility is shaped outside owned content.

This shift reframes the role of partner programs. Historically, partnerships have been measured primarily on direct revenue attribution. Did this affiliate drive a conversion? Did this reseller close a deal?

That still matters. But the PartnerStack data points to a second layer of value: every partner-generated asset — whether a comparison article, integration listing or review — contributes to the external citation surface that AI models use when constructing category answers.


Partner ecosystems as citation infrastructure

If third-party citations are the new currency of AI search, then partner ecosystems are one of the most effective ways to generate them at scale.

The advantage isn’t just volume. It’s structural diversity.

A brand’s internal team is limited to its own perspective. In contrast, partner ecosystems create a distributed layer of content across different formats — like reviews, comparisons, tutorials, integration documentation and use-case narratives. This matters because AI-generated answers prioritize corroborated signals. The broader and more varied the coverage, the more likely a product is to appear in high-intent queries.

Partner-generated content often reflects real-world deployment experience, covering edge cases, integration notes and use-case contexts that owned content rarely captures. That expands a product’s presence into query spaces that a brand’s own content cannot easily reach.

How to think about citation surfaces

This means shifting from traditional content marketing to citation surface management. Instead of “what are we publishing?” the question becomes: “where are we being referenced, by whom, and in what context?” In other words, how often does the brand actually show up in AI search?

This breaks down into four areas:

1. Identify high-intent query clusters

AI visibility clusters around recurring query patterns like:

- Category intent: "Best [category] software," e.g., "best partner management software for SaaS"

- Use-case intent: "Top tools for [use case]," e.g., "top affiliate platforms for mid-market"

- Competitive intent: "[Competitor] alternatives," e.g., "PartnerStack alternatives"

- Comparison intent: "[Product A] vs [Product B]" comparison queries
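One way to turn these patterns into a repeatable prompt list is to treat each intent type as a template and fill it in. The sketch below is illustrative only: the template wording, the function name `build_prompt_clusters` and the vendor names are all placeholders, not part of any PartnerStack tooling.

```python
# Hypothetical templates mirroring the four intent types listed above.
TEMPLATES = {
    "category": "best {term} software",
    "use_case": "top tools for {term}",
    "competitive": "{term} alternatives",
    "comparison": "{term} vs {other}",
}


def build_prompt_clusters(categories, use_cases, vendor, competitors):
    """Expand the templates into concrete prompts, grouped by intent type."""
    return {
        "category": [TEMPLATES["category"].format(term=c) for c in categories],
        "use_case": [TEMPLATES["use_case"].format(term=u) for u in use_cases],
        # Buyers search for alternatives to your own product, so use the vendor name.
        "competitive": [TEMPLATES["competitive"].format(term=vendor)],
        "comparison": [
            TEMPLATES["comparison"].format(term=vendor, other=c) for c in competitors
        ],
    }


clusters = build_prompt_clusters(
    categories=["partner management"],
    use_cases=["affiliate tracking"],
    vendor="ExampleVendor",        # placeholder name
    competitors=["OtherVendor"],   # placeholder name
)
```

Keeping prompts grouped by intent makes it easier to compare like with like when the same list is re-run later.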

2. Map citation gaps across AI surfaces

Assess where you appear in AI-generated answers, and where competitors show up instead. This means reviewing outputs across ChatGPT, Perplexity and Google AI Overviews to identify which third-party sources dominate visibility across key query clusters.
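As a rough illustration, the review can be reduced to a small tally once the cited sources for each answer have been recorded by hand. The sketch below assumes a simple record format (`cluster`, `engine`, `cited_domains`) and uses placeholder domains; it does not call any answer engine API.

```python
from collections import Counter, defaultdict


def dominant_sources(observations, top_n=3):
    """For each cluster, list the domains cited in the most answers."""
    counts = defaultdict(Counter)
    for obs in observations:
        # Count a domain once per answer, even if it is cited several times.
        counts[obs["cluster"]].update(list(dict.fromkeys(obs["cited_domains"])))
    return {cluster: c.most_common(top_n) for cluster, c in counts.items()}


def citation_gaps(observations, own_domains):
    """Return clusters where none of own_domains is cited in any answer."""
    own = set(own_domains)
    seen, covered = [], set()
    for obs in observations:
        if obs["cluster"] not in seen:
            seen.append(obs["cluster"])
        if own & set(obs["cited_domains"]):
            covered.add(obs["cluster"])
    return [c for c in seen if c not in covered]


# Hand-recorded sample data; every domain here is a placeholder.
observations = [
    {"cluster": "category", "engine": "engine-a",
     "cited_domains": ["reviews.example", "blog.example", "reviews.example"]},
    {"cluster": "category", "engine": "engine-b",
     "cited_domains": ["reviews.example"]},
    {"cluster": "comparison", "engine": "engine-a",
     "cited_domains": ["vendor.example", "blog.example"]},
]

top = dominant_sources(observations)
gaps = citation_gaps(observations, {"vendor.example"})
```

Here `top` shows which third-party domains recur within each cluster, and `gaps` lists the clusters where the brand's own or partner domains never appear.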

3. Activate your partner ecosystem by content role

Different partner types contribute different forms of citation coverage:

- Agency partners: deployment guides, comparison articles and client case studies

- Affiliates: listicles, "best of" roundups and category landing pages

- Integration partners: integration listings and directories

- Review partners, customers and advocates: reviews on third-party platforms

4. Track citation share per prompt cluster

Citation visibility should be measured at the query cluster level rather than the page or domain level.

- Assign each query cluster a baseline citation share score

- Re-run prompts monthly across ChatGPT, Perplexity and Google AI Overviews

- Measure whether partner-published content appears as citations

- Adjust partner enablement priorities based on where citation gaps persist
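The tracking loop above can be sketched in a few lines. The article does not define how a citation share score is calculated, so this example assumes one simple definition: the fraction of recorded answers in a cluster that cite at least one tracked partner domain. The `PromptRun` type, function names and domains are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptRun:
    cluster: str                      # query cluster the prompt belongs to
    engine: str                       # answer engine the prompt was run on
    cited_domains: tuple[str, ...]    # domains cited in the answer


def citation_share(runs, tracked_domains):
    """Share of answers per cluster citing at least one tracked domain (assumed metric)."""
    totals, hits = {}, {}
    for run in runs:
        totals[run.cluster] = totals.get(run.cluster, 0) + 1
        if any(d in tracked_domains for d in run.cited_domains):
            hits[run.cluster] = hits.get(run.cluster, 0) + 1
    return {c: hits.get(c, 0) / totals[c] for c in totals}


def share_change(baseline, current):
    """Difference between this month's share and the baseline, per cluster."""
    return {c: current[c] - baseline.get(c, 0.0) for c in current}


# Placeholder data standing in for one monthly re-run.
partner_domains = {"partner-blog.example"}
runs = [
    PromptRun("category", "engine-a", ("reviews.example", "partner-blog.example")),
    PromptRun("category", "engine-b", ("reviews.example",)),
    PromptRun("category", "engine-c", ("analyst.example",)),
    PromptRun("category", "engine-a", ("reviews.example",)),
    PromptRun("comparison", "engine-a", ("partner-blog.example",)),
    PromptRun("comparison", "engine-b", ("reviews.example",)),
]

current = citation_share(runs, partner_domains)
change = share_change({"category": 0.25, "comparison": 0.0}, current)
```

Clusters where `change` stays flat or negative month over month are candidates for the partner enablement adjustments described in the last step.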

Prompt cluster examples: understanding citation surfaces

For a vendor like PartnerStack, the relevant prompt clusters to monitor — and where citations typically originate — include:

- Best partner management software: Review aggregators (G2, Capterra), affiliate comparison blogs, SaaStr coverage

- Top affiliate platforms for SaaS: Affiliate marketing publications, G2 Grid, integration directory listings

- PartnerStack alternatives: G2 comparison pages, TrustRadius reviews, SaaS industry blogs, competitor affiliate content

- How to manage B2B partnerships: Agency partner blog posts, HubSpot ecosystem content, niche SaaS publications

- Partner ecosystem software reviews: Review aggregators (G2, Capterra, TrustRadius), analyst summaries

Running these prompt clusters monthly across ChatGPT, Perplexity and Google AI Overviews gives you a concrete view of where your citation presence is strong and where it needs investment.

Operationalizing the ecosystem

In practice, coordinating citation coverage across hundreds of partners, publishers and platforms can quickly become an operational challenge.

PartnerStack’s Content Marketplace helps teams manage this complexity. It connects two steps: identifying the specific third-party domains that drive AI answers in a category, and activating third-party content that increases citation coverage across key AI surfaces.

From technical SEO to citation architecture

Most advice about optimizing for AI search is tactical:

- Write in question-and-answer format

- Use structured data

- Aim for featured snippets

- Keep paragraphs concise

None of that is necessarily wrong. The issue is that it addresses the symptom rather than the underlying architecture that determines citation coverage. That architecture is what an effective AI search optimization strategy now needs to account for.

The companies that will win AI search over the next three to five years aren't going to be those with the best individual content pieces. They'll be the ones who have built the most comprehensive, credible, distributed citation networks.

That means owned content optimized for AI retrieval.

It means third-party review coverage that is broad and authentic.

It means partner ecosystems that are actively generating content across the full range of surfaces where AI answers are built.

The 43% figure from PartnerStack’s research can serve as a benchmark here: it is the share of AI citations for top-performing vendors in the PartnerStack network that originate from partner ecosystem content. While directional, this suggests that companies without developed partnership programs are structurally disadvantaged in the channels that increasingly shape early-stage B2B buying discovery.

These systems require different strategies, different investments and a different understanding of what it means to be visible.

For B2B SaaS companies still optimizing exclusively for page one, the more urgent question is simple: where are you showing up when a buyer uses AI search?

The ranking era isn't over. But the citation era has arrived alongside it.

Explore PartnerStack’s Content Marketplace to start activating third-party citations at scale.